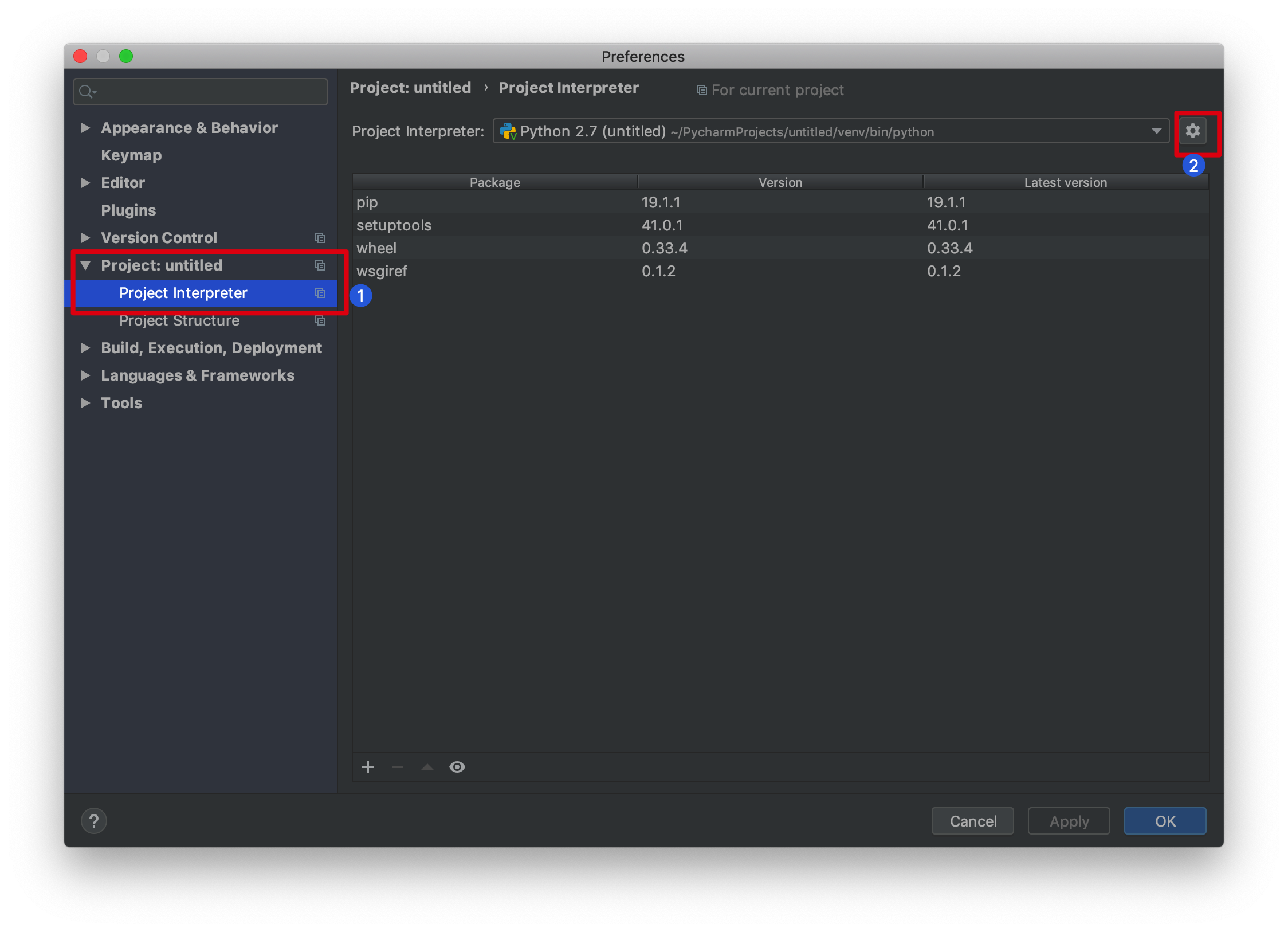

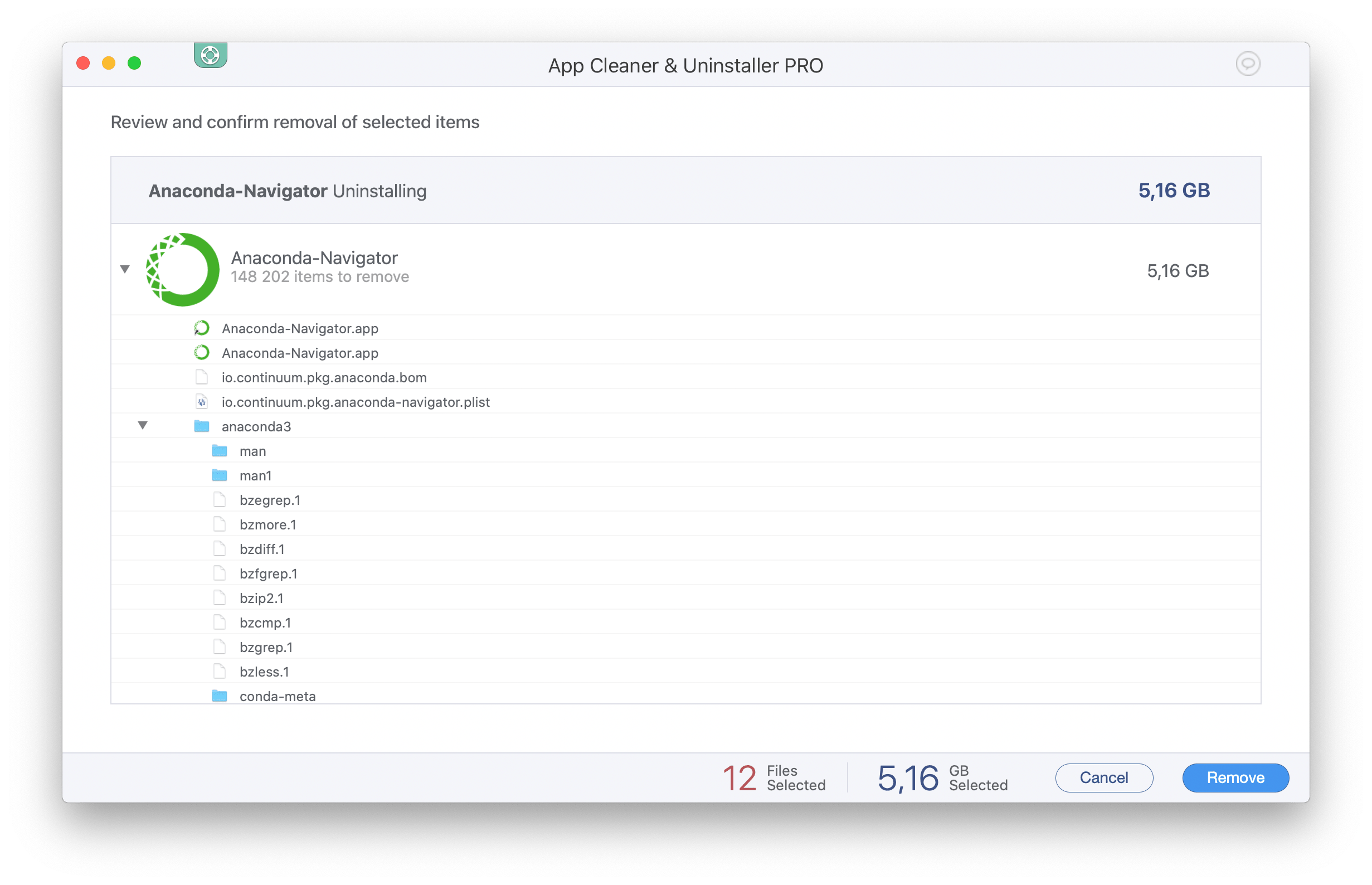

Visual Studio Code needs some configuration to find the default Anaconda Python environment. This section of the document assumes VS Code has been installed on the machine; if it still needs to be installed, follow the steps in the Mac - Install VSCode documentation first.

Mac OS may give a security warning when Anaconda Navigator opens a Terminal. In the example shown here, the Mac Terminal launches with the 'base' Python environment loaded, and the beginning of the prompt shows (base) to indicate this.
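One way to confirm that VS Code (or a Terminal) picked up the Anaconda environment is to run a short script with the selected interpreter and look at the paths it reports. The following is a minimal sketch rather than part of the original instructions: the function name `describe_python_environment` is made up for illustration, and it assumes conda sets the `CONDA_DEFAULT_ENV` variable when an environment is activated.

```python
import os
import sys


def describe_python_environment():
    """Collect details that show which Python installation is running."""
    return {
        # Full path of the interpreter executing this script.
        "executable": sys.executable,
        # Root directory of the active installation or environment.
        "prefix": sys.prefix,
        # Set by conda on activation (e.g. "base"); None if no conda env is active.
        "conda_env": os.environ.get("CONDA_DEFAULT_ENV"),
    }


if __name__ == "__main__":
    for key, value in describe_python_environment().items():
        print(f"{key}: {value}")
```

If the 'base' environment is active, `conda_env` should read `base` and both paths should point inside the Anaconda installation directory.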

The sample program obtains its Spark session with `getOrCreate()` and builds the DataFrame with `df = spark.createDataFrame(data).toDF(*columns)`. This completes the PySpark install in Anaconda, validating PySpark, and running it in Jupyter notebook and the Spyder IDE. I have tried my best to lay out step-by-step instructions. In case I missed any, or you have any issues installing, please comment below; your comments might help others.

To launch Anaconda Navigator, hold down the Command ⌘ key on your keyboard, press the Space Bar, type 'Anaconda' in the search field, and click on the 'Anaconda-Navigator' icon. Anaconda Navigator will launch; initial startup may take some time to complete. If Anaconda Navigator detects an available update, it will prompt to install the update; click 'Yes' to update automatically.

To confirm that Terminal has the base Python/Anaconda environment: Anaconda Navigator will create scripts so that the 'base' environment is always loaded when the Mac Terminal is launched. Launch a Mac Terminal window by holding down the Command ⌘ key, pressing the Space Bar, typing 'Terminal' in the search field, and clicking on the 'Terminal' icon. The beginning of the prompt line in Terminal will show '(base)' (or another Python environment, if applicable).

To launch a specific Python/Anaconda environment using Anaconda Navigator: to make management of various environments within Python/Anaconda easier, Anaconda Navigator offers the ability to manually configure an environment for a specific Terminal (or other program) launch. To do this, launch Anaconda Navigator, click on the 'Environments' tab on the left, click on the Play button, and then click on 'Open Terminal'.

Various online sources will suggest using pip to install packages or fix various issues. If Anaconda is installed on a machine, the conda and pip package managers can conflict, leading to larger and more complex issues. If the machine has Anaconda installed, use only conda commands, not pip commands.
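To see whether pip has already been used inside a conda environment, the package list reported by conda can be filtered. This is a rough sketch under stated assumptions: it relies on `conda list --json` marking pip-installed packages with the channel `pypi`, which may differ between conda versions, and both helper names are hypothetical.

```python
import json
import subprocess


def pip_installed_packages(records):
    """Return the sorted names of packages whose channel is reported as 'pypi'."""
    # Assumption: conda labels packages installed by pip with channel "pypi".
    return sorted(r["name"] for r in records if r.get("channel") == "pypi")


def list_conda_packages():
    """Run `conda list --json` in the active environment and parse its output."""
    result = subprocess.run(
        ["conda", "list", "--json"],
        capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)


if __name__ == "__main__":
    try:
        print(pip_installed_packages(list_conda_packages()))
    except (FileNotFoundError, subprocess.CalledProcessError) as exc:
        # conda is not on PATH, or the command failed.
        print(f"Could not query conda: {exc}")
```

An empty list suggests that every package in the environment came from conda; any names that do appear are candidates for the conflicts described above.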

Let's create a PySpark DataFrame with some sample data to validate the installation. Enter the commands in the PySpark shell in order, beginning with the assignment to `data`. Note that the SparkSession `spark` and the SparkContext `sc` are available by default in the PySpark shell. For more examples on PySpark, refer to PySpark Tutorial with Examples. You can now open the Spark Web UI in your favorite web browser to monitor your jobs.

With the last step, the PySpark install in Anaconda is complete, and the installation has been validated by launching the PySpark shell and running the sample program. Now let's see how to run a similar PySpark example in Jupyter notebook.

Open Anaconda Navigator. On Windows, use the Start menu or type Anaconda in search; on Mac, you can find it in Finder => Applications or in Launchpad. Anaconda Navigator is a UI application where you can control Anaconda packages, environments, etc. If you don't have Jupyter notebook installed on Anaconda, install it by selecting the Install option. Post-install, open Jupyter by selecting the Launch button. This opens Jupyter notebook in the default browser.

Now select New -> PythonX, enter the lines of the example, and select Run. In Jupyter, each cell runs on its own, so you can run a cell independently when it has no dependencies on previous cells. If you get a PySpark error in Jupyter, run the commands that locate PySpark in a notebook cell, then run the example again to make sure PySpark is working in Jupyter. You might get the warning “WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform” on the second command; ignore it for now.

If you don't have Spyder on Anaconda, install it by selecting the Install option in Navigator. Post-install, write the sample program and run it by pressing F5 or by selecting the run button from the menu.
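The validation described above can be sketched as a small program for the PySpark shell, a Jupyter cell, or Spyder. The original listing is not reproduced here, so the sample rows, the column names, the function name, and the application name `install-check` are placeholders chosen for illustration; only `getOrCreate()` and `createDataFrame(data).toDF(*columns)` come from the text.

```python
# Hypothetical sample data; any small list of tuples would do.
columns = ["language", "users_count"]
data = [("Java", 20000), ("Python", 100000), ("Scala", 3000)]


def build_sample_dataframe():
    """Create (or reuse) a SparkSession and build a DataFrame from the sample data."""
    # Imported here so this file still loads where PySpark is not installed.
    from pyspark.sql import SparkSession

    # The application name is an arbitrary placeholder.
    spark = SparkSession.builder.appName("install-check").getOrCreate()
    # Build the DataFrame, then assign the column names.
    df = spark.createDataFrame(data).toDF(*columns)
    return spark, df
```

Calling `spark, df = build_sample_dataframe()` followed by `df.show()` should print the three rows as a table if the installation works. In the PySpark shell, `getOrCreate()` should return the session that already exists as `spark` rather than starting a new one.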
